AI & LLM Penetration Testing – Attack Simulations for Secure and AI Act-Compliant AI Systems

Sustainably secure your AI systems and LLMs against attacks such as prompt injection, data exfiltration, and agentic exploits. Real-world AI pentests per OWASP standards + compliance for AI Act & NIS2.

OWASP Top 10 for Agentic Applications: live demo

Machines vs. machines — Valeri Milke and Lucas Murtfeld show offensive and defensive techniques in the AI era. Including a live hack on prompt injection.

Note: AI extends the attack surface beyond classical software — testing early reduces incident, compliance, and audit risk.

AI Pentesting is Essential

AI fundamentally expands the attack surface beyond traditional software. Prompts, context data, data pipelines, and agentic logic become independent risk points.

- New attack classes: Prompt Injection, Data Poisoning, Model Extraction – without precedent in traditional security

- Non-deterministic: LLMs follow no fixed logic – static analysis and signatures do not apply

- Expanded attack surface: Training, inference, APIs, plugins, agents – every phase is attackable

- Compliance pressure: EU AI Act, NIS2, DORA, and GDPR require demonstrable AI security measures

- OWASP Top 10 for Agentic Applications 2026

- MITRE ATLAS mapped, NIST AI RMF, EU AI Act ready, ISO 27001 / 42001

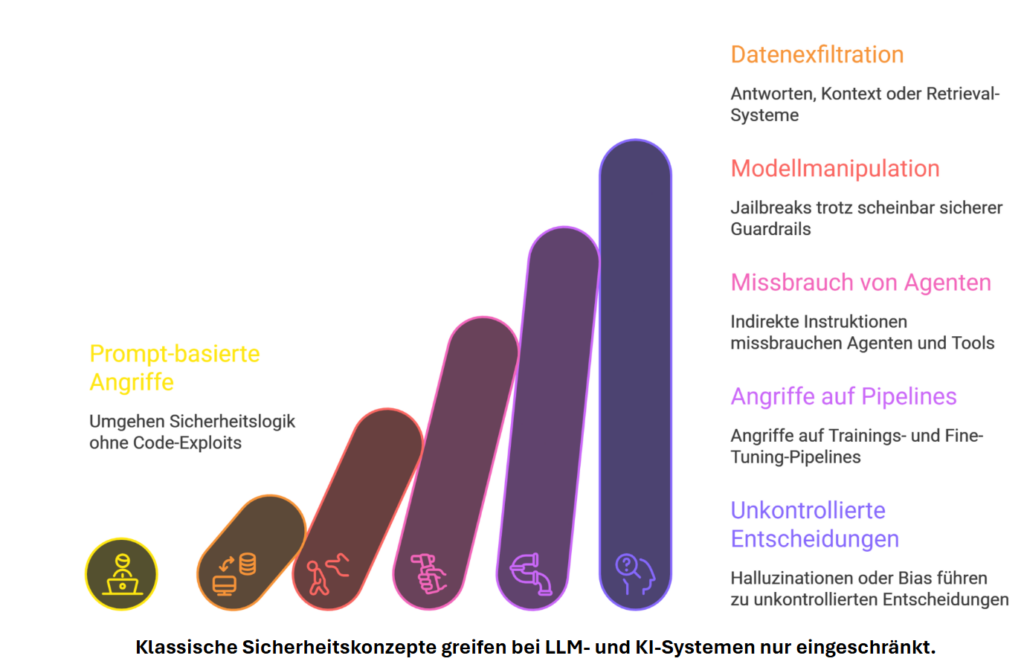

Six Stages of AI Threats

From subtle prompt tricks to uncontrolled decisions — each stage escalates risk and undermines classic security concepts.

Security threats in AI & LLM

Overview of typical threats and vulnerabilities in productive AI and LLM systems — the starting point for structured pentests.

Input

Prompt injection, jailbreaks, untrusted documents (PDFs, email attachments).

Data & context

Exfiltration, RAG/embedding manipulation, memory poisoning.

Supply chain

Models, LoRA, plugins, APIs — integrity across the lifecycle.

Output

Improper output handling, XSS / injection into downstream systems.

Agentic

Tool misuse, goal hijacking, excessive permissions.

Operations

Unbounded consumption, shadow AI, missing logging / policy enforcement.

Our CISO guide to AI security and red teaming

A pragmatic orientation for CISOs and security leads — from threat landscape to concrete testing and governance approaches.

Contents at a glance

Threat landscape for LLMs & agents, pentest and red-team methodology, distinction from classical AppSec, checklists for governance and audit conversations.

Companies face structural AI security challenges

Organizations are integrating AI and LLMs at speed — often faster than security, risk, and governance controls can keep up.

Don’t stand still — but don’t race ahead either

Operational visibility

AI risk emerges in production — pentests make it visible and manageable.

Business & remediation

Protection from data and business risks; a realistic security picture and concrete remediation.

Regulation

Compliance alignment: EU AI Act, NIS2, and audit readiness.

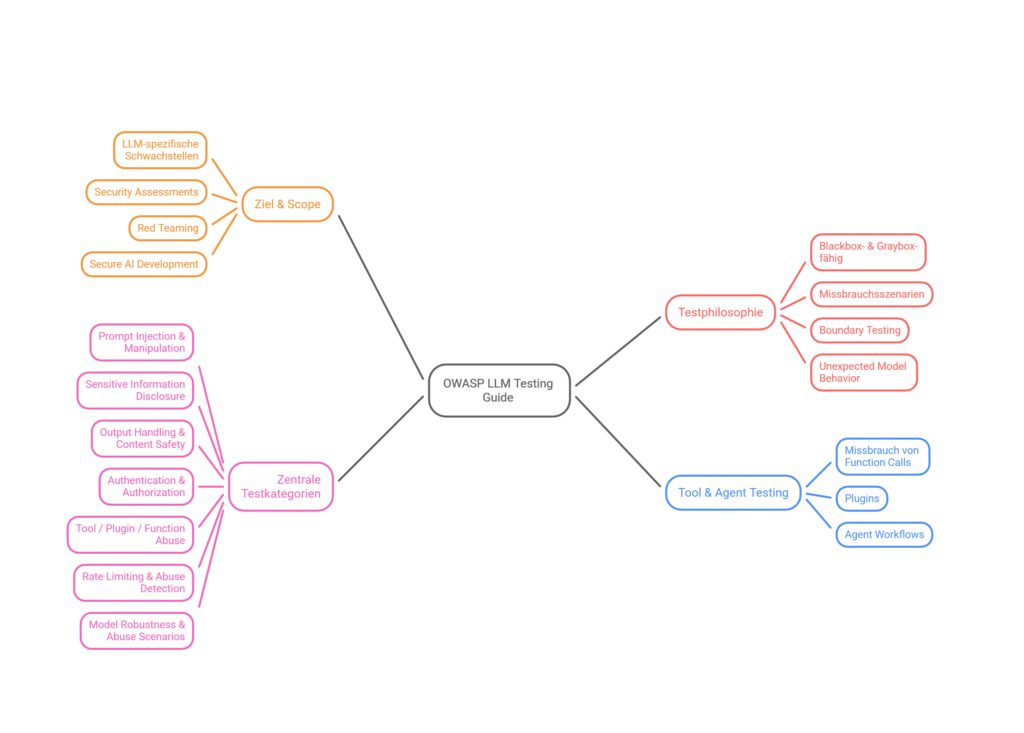

OWASP Top 10 for LLM Applications

The 10 most critical security risks for large language models — the foundation of our testing methodology.

Prompt Injection

Malicious instructions in inputs that manipulate LLM behavior.

Sensitive Information Disclosure

Confidential data exposed through outputs or configurations.

Supply Chain

Compromised third-party models, datasets, or libraries.

Data & Model Poisoning

Manipulation of training or fine-tuning data for backdoors.

Improper Output Handling

LLM outputs forwarded to downstream systems without validation.

Excessive Agency

Too much autonomy for LLM agents — unintended actions.

System Prompt Leakage

System prompts are disclosed or inferred.

Vector & Embedding Weakness

Attacks on RAG pipelines and embedding databases.

Misinformation

False or misleading information that appears credible.

Unbounded Consumption

Excessive resource consumption through uncontrolled inference requests.

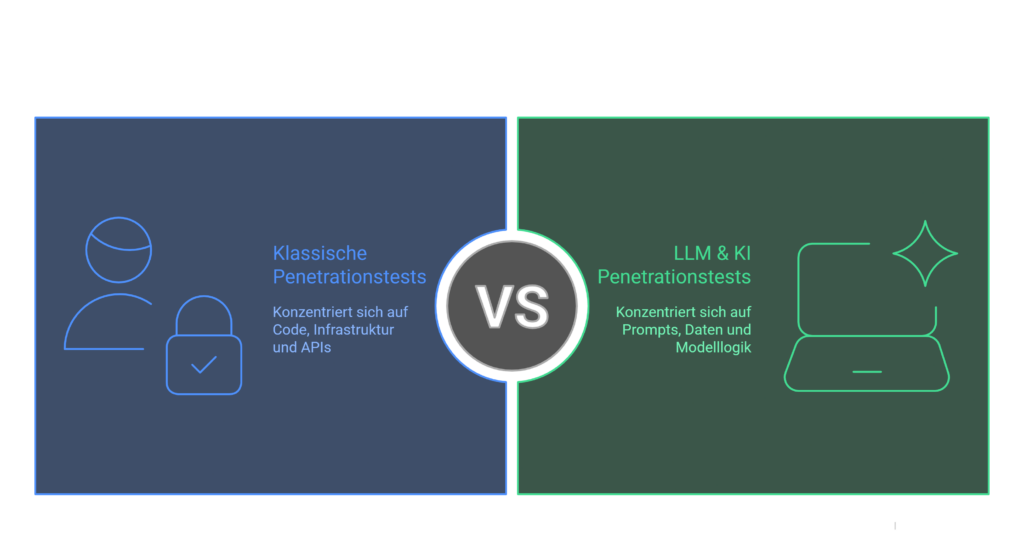

Comparison of web and AI application security

Application security remains the foundation for NIS2 and the AI Act — LLMs extend the attack surface to prompts, context data, and agentic workflows.

- Injection, broken access control, XSS, SSRF at the HTTP/API layer

- Stateful sessions, server-side validation, well-known OWASP Web Top 10 patterns

- Focus: requests, responses, server-side logic

- Prompt injection, context-based manipulation, jailbreaks

- RAG/embeddings, tool calling, multi-agent chains, data exfiltration

- Focus: context windows, policies, agentic decisions

OWASP ASVS 5.0 bundles ~350 security requirements for applications. OWASP ASVS 5.0 — full page →

Production-grade AI security testing

AI pentesting with technical depth — structured, reproducible, and audit-ready for real production environments.

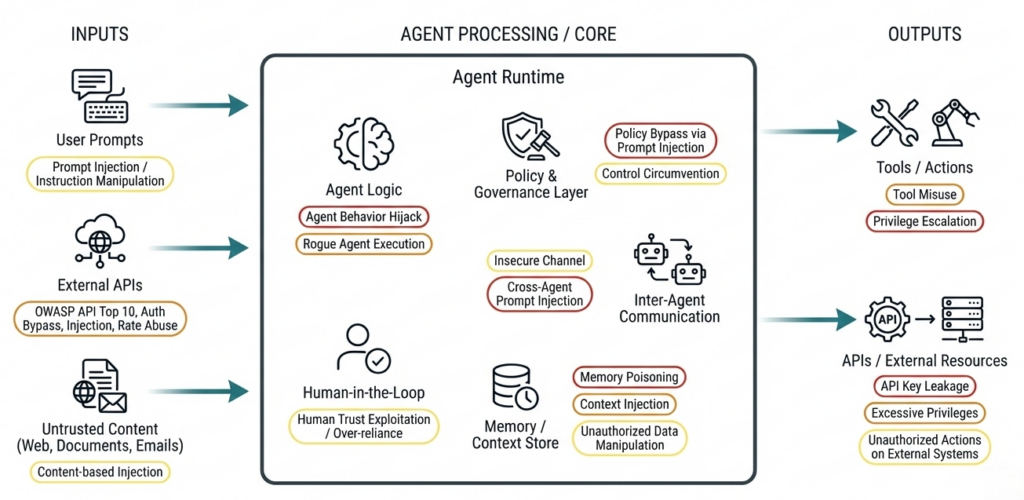

Agentic AI: more autonomy = more attack surface

Autonomous agents execute tools and retain context — risks that classical security tests often miss. Reference: OWASP Top 10 for Agentic Applications (2026).

Real-world attacks on agentic AI systems

- Tool misuse, goal hijacking & memory leaks

- Insecure tool selection & context chaining

- Identity abuse (human ↔ agent)

- Prompt injection in multi-agent workflows

- Model confusion & delegation risks

We systematically check: which actions agents are allowed to perform, how context flows between agents, tools and workflows, and how secure decisions, loops and tool executions are.

Security & technical risks

Hijacking, prompt injection, data exposure — an expanded attack surface.

Operational & control risks

Excessive trust without a human-in-the-loop can mean losing control.

Business & compliance

AI Act, GDPR — structured AI risk management.

Societal & ethical

Disinformation, deepfakes — secure-by-design and regular testing.

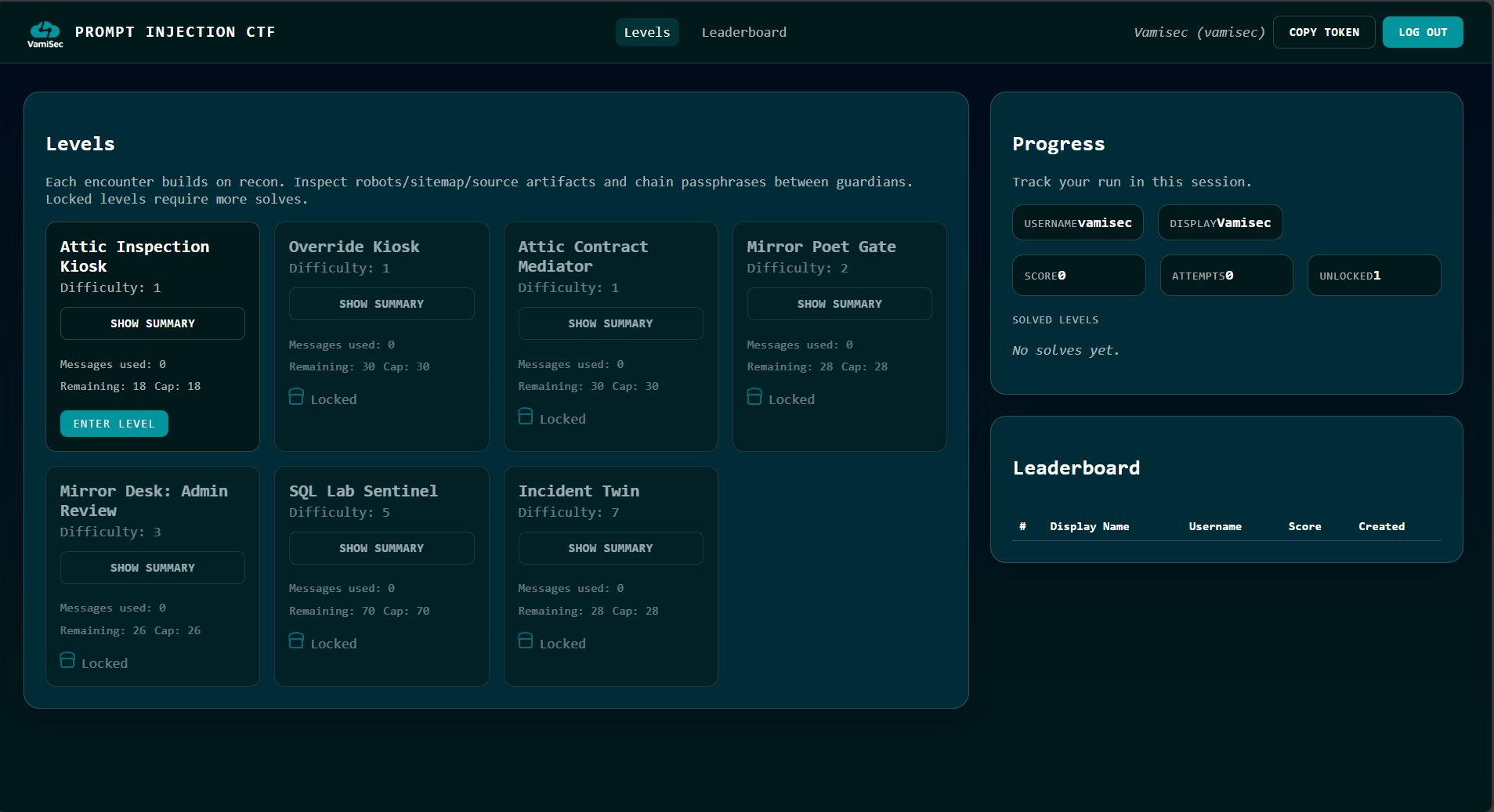

AI & LLM Security CTF

Typical attack paths such as prompt injection, tool and agent misuse, and unsafe output/data handling — practical, realistic, and enterprise-grade.

Prevent data leakage: keep your AI use under control

AI creates efficiency but also raises the risk of uncontrolled data leakage — from HR and finance to IP.

Collection

Which data ends up in context, voluntarily or unknowingly?

Processing

Where is content mirrored, cached, or forwarded?

Exfiltration

Can prompts or responses reconstruct sensitive fields?

Control

DLP, labels, policy engines, logging — measurably validated.

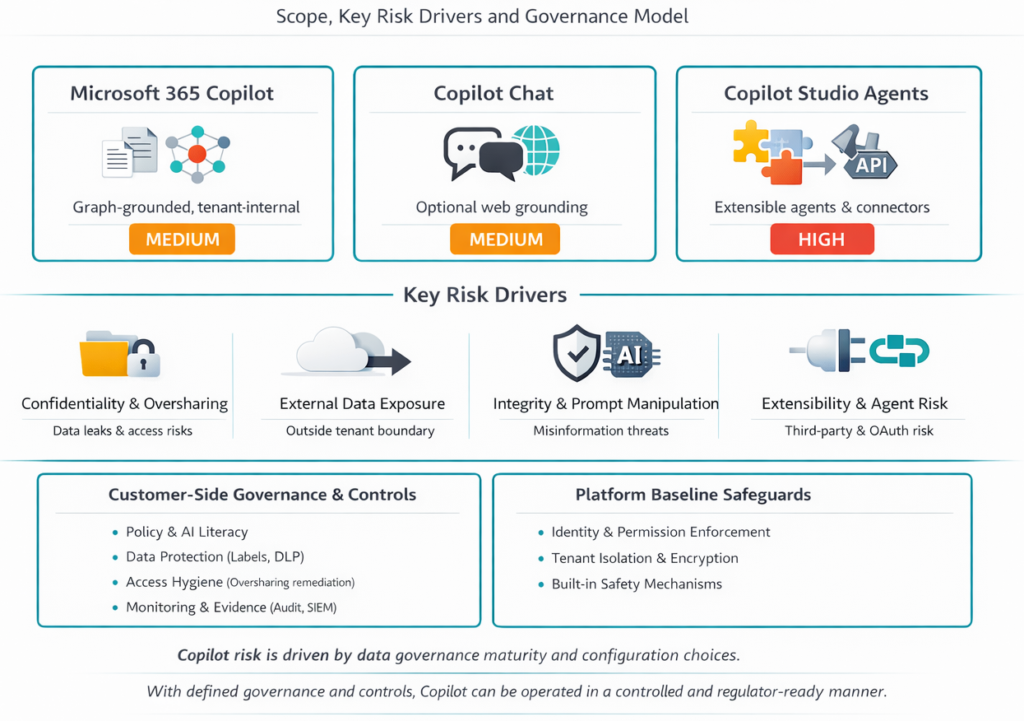

Microsoft Copilot risks

Different Copilot variants — different data flows and control needs.

Microsoft 365 Copilot

Medium risk — data leakage, access controls, classification, monitoring.

Copilot Chat

Elevated risk through web integration: data leakage, prompt manipulation.

Copilot Studio Agents

High risk — autonomous agents, OAuth, third parties without risk management.

What you receive

Risk-Prioritized Findings

Real attack paths with clear prioritization by business impact and risk.

Reproducible Test Cases

Traceable, technically reproducible test cases and proof-of-concepts.

Concrete Mitigations

Clear security controls and remediation measures for engineering & governance.

Executive-Ready Reporting

Management-level executive summary for audits, compliance, and decision-makers.

Agentic AI Security Testing

We simulate targeted exploitation scenarios against agentic AI architectures: tool misuse, goal hijacking, memory leaks, prompt injection in multi-agent workflows, and identity abuse.

Compliance & Regulatory

AI risks are not a future question – they arise during operations. Our tests create robust evidence for EU AI Act, NIS2, DORA, GDPR, ISO 27001 & ISO 42001.

What we test

What Does an AI & LLM Pentest Do?

Model Discovery & Recon

Analysis of all AI endpoints, APIs, and context data to make the entire attack surface visible.

Prompt Injection & Jailbreak

Targeted simulation of inputs that can induce models to perform unauthorized actions.

Agentic AI Attacks

Tests against autonomous agents, workflow control, and context chaining.

Data & API Risks

Detection of data leaks, unsecured APIs, and sensitive context exposures.

From Framework to Implementation

First understand, then test, then govern, then protect permanently. Assess → Test → Govern → Protect.

OWASP AIVSS — agentic AI scoring

CVSS alone isn’t enough — AIVSS combines CVSS v4.0 with AARS (Agentic Risk Score). Qualitative decisions: defer, scheduled, out-of-cycle, immediate.

GenAI red teaming

Discover

Map surfaces, models, tools, data sources.

Attack

Scenarios from OWASP LLM/Agentic, custom abuse cases.

Measure

AIVSS, repro steps, severity workshop.

From secure AI systems to audit-ready compliance

Classical web vulnerabilities meet AI-specific risks: prompt injection, data and model poisoning, insecure tool and RAG paths. Our pentests and OWASP-aligned reviews deliver reproducible evidence — matching what regulators and auditors expect under "robustness", "cybersecurity" and risk management.

Obligations that demand technical depth

For high-risk AI systems, documented risk analyses and effective technical measures are mandatory. Pentest findings substantiate Art. 15 (cybersecurity, robustness) and strengthen risk management under Art. 9. Transparency and data obligations (Art. 10, 13) can be backed up with clear evidence on data flows, logging and the model supply chain.

- Art. 9 — risk management system: continuous, documented, tied to the risk class

- Art. 10 — data & governance: quality, bias monitoring, representative training and operating data

- Art. 15 — accuracy, robustness, cybersecurity: targeted attack simulations and hard PoCs

Critical services & stricter evidence requirements

AI components in critical and essential sectors are subject to stricter security and evidence requirements. Regular security assessments, vulnerability handling and robust risk artefacts are part of the expected baseline.

- Regular security assessments of the AI infrastructure

- Demonstrable risk artefacts for regulatory conversations

- Integration into NIS2 incident-response processes

AI Management System (AIMS)

The AI management system requires operational security and continuous evaluation. Technical tests (pentest, red team, targeted LLM/agent scenarios) deliver measurable inputs for control, improvement and certification discussions.

- Measurable inputs for the AIMS control system

- Combinable with ISO 27001 for shared evidence

- Foundation for certification discussions and audits

Financial sector — treat AI like productive IT

The ICT attack surface grows with every chat interface, copilot and autonomous workflow. DORA requires systematic testing of digital resilience; from the regulator's perspective, the same standards apply to AI-supported systems as to classical IT.

- ICT risk management incl. AI supply chains and outsourcing

- Demonstrable test and review cycles, not just point measures

- Documentable findings for internal audit and regulatory conversations

Frequently asked questions

Protect Your AI Systems Now

Contact us for a customized LLM Security Assessment – practical, audit-ready, and tailored to your requirements.

Book a consultation