Implement AI Act Compliance Efficiently – With Integrated ISMS & AIMS per ISO 27001 and ISO 42001

Efficient, secure, and legally compliant implementation of the new AI regulation, in time for the date it becomes generally applicable: August 2, 2026.

EU AI Act

The EU AI Act is the first comprehensive law regulating AI in the EU. It aims to ensure the safe and transparent use of AI, minimize risks, and promote innovation. The law sets binding rules for the development, deployment, and use of AI systems based on their risk potential. The goal is to protect fundamental rights, safety, and ethical standards in the handling of AI.

For companies, the AI Act means new obligations, clear documentation requirements, and high penalties for non-compliance. VamiSec supports you with a legally compliant and efficient implementation.

4 Risk Levels of the EU AI Act

The AI Act classifies AI systems by their risk potential. The higher the risk, the stricter the requirements.

Unacceptable risk: Prohibited AI systems (e.g., social scoring, manipulative techniques)

High risk: Strict requirements for documentation, risk management, and conformity assessment (Art. 43)

Limited risk: Transparency obligations (e.g., chatbots, deepfakes, generative AI)

Minimal risk: No additional obligations – voluntary codes of conduct recommended
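As a rough illustration, the four risk levels can be kept in an internal AI inventory that maps each use case to its obligations. The sketch below is a minimal example under stated assumptions: the inventory entries and the `obligations_for` helper are hypothetical, the obligation texts paraphrase the list above rather than the legal text, and the risk level of each use case would be assigned by a qualified reviewer, not by code.

```python
from enum import Enum


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Obligation summaries paraphrased from the risk-level list (not legal text).
OBLIGATIONS = {
    RiskLevel.UNACCEPTABLE: "prohibited",
    RiskLevel.HIGH: "documentation, risk management, conformity assessment (Art. 43)",
    RiskLevel.LIMITED: "transparency obligations",
    RiskLevel.MINIMAL: "no additional obligations; voluntary codes of conduct",
}

# Hypothetical inventory: use case -> risk level assigned by a human reviewer.
INVENTORY = {
    "customer-support chatbot": RiskLevel.LIMITED,
    "CV screening for hiring": RiskLevel.HIGH,
    "spam filter": RiskLevel.MINIMAL,
}


def obligations_for(use_case: str) -> str:
    """Return the obligation summary for a use case in the inventory."""
    return OBLIGATIONS[INVENTORY[use_case]]


if __name__ == "__main__":
    for name in INVENTORY:
        print(f"{name}: {obligations_for(name)}")
```

Keeping the classification in one place makes it easier to see which systems need the most attention first.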

Your AI Act Compliance at a Glance

AI Act Gap Analysis

Identifying existing gaps between your ISMS and EU AI Act requirements – from risk classification to data management and documentation obligations.

Governance Structure & AI Officer

Building clear responsibilities and decision-making pathways. On request, we provide an external virtual AI Officer (vAI Officer) as an independent point of contact for oversight and compliance.

Synergistic Integration Concept

Integrating the AI Management System (AIMS) into your existing ISMS – with a focus on efficiency and reuse of existing structures (asset, risk, supplier management, policies, awareness, audits).

Technology & Organization in Harmony

Implementing proven security measures from ISO 27001 & ISO 42001 combined with AI Act-specific controls, training, and registry management.

Operations & Improvement

Establishing an integrated, auditable management system that is reviewed regularly, for continuous improvement and sustainable AI Act readiness.

AI Act & ISO 42001 Gap Analysis

After the assessment, we create a consolidated roadmap to AI Act readiness – from gap analysis to optional ISO 27001 and ISO 42001 certification.
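At its core, a gap analysis compares what your ISMS already covers with what the AI Act additionally requires. A minimal sketch, assuming both sides can be expressed as sets of control identifiers; the identifiers and the `gap_analysis` function are hypothetical and only illustrate the idea:

```python
# Hypothetical control identifiers; real ones come from your own ISMS
# documentation and from the assessment itself.
EXISTING_ISMS_CONTROLS = {
    "asset-inventory",
    "risk-management",
    "supplier-management",
    "awareness-training",
    "internal-audit",
}

REQUIRED_FOR_AI_ACT = {
    "asset-inventory",
    "risk-management",
    "supplier-management",
    "ai-risk-classification",
    "data-governance",
    "technical-documentation",
}


def gap_analysis(existing: set[str], required: set[str]) -> dict[str, list[str]]:
    """Split required controls into those already covered and those missing."""
    return {
        "reusable": sorted(existing & required),  # already in place, can be reused
        "gaps": sorted(required - existing),      # still to be implemented
    }


if __name__ == "__main__":
    result = gap_analysis(EXISTING_ISMS_CONTROLS, REQUIRED_FOR_AI_ACT)
    print("Reusable:", result["reusable"])
    print("Gaps:", result["gaps"])
```

The "reusable" list is where an integrated approach saves effort, and the "gaps" list becomes the starting point for the roadmap.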

Integrated Management System: ISMS + AIMS

ISO 27001 (ISMS) and ISO 42001 (AIMS) together form the foundation for sustainable AI Act and NIS2 compliance.

Conformity Assessment under the EU AI Act

Proof of the safety, transparency, and legal conformity of AI systems. Comparable to CE marking for products, the assessment is mandatory for high-risk AI.

Technical Documentation

Complete documentation of the AI system, including training data, algorithms, and risk assessments.

Data & Quality Management

Evidence of the origin, completeness, and quality of the data used.

Risk Management Processes

Systematic analysis and treatment of risks such as bias, discrimination, erroneous decisions, or manipulation.

Cybersecurity & Robustness

Protective measures against attacks on AI models and data integrity.

Transparency & Traceability

Ensuring that AI decisions are explainable (Explainable AI).

Continuous Monitoring

Processes for tracking AI performance in operation and adapting to risks or changes.
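Continuous monitoring can start very simply, for example by comparing recent performance against a baseline recorded at release. The sketch below is one possible approach, not a prescribed method: the `needs_review` function, the accuracy metric, and the 5-point tolerance are assumptions chosen for illustration.

```python
from statistics import mean


def needs_review(
    baseline_accuracy: float,
    recent_outcomes: list[bool],
    tolerance: float = 0.05,
) -> bool:
    """Flag the system for review if recent accuracy falls below the baseline.

    recent_outcomes holds one entry per checked prediction
    (True = correct, False = incorrect).
    """
    if not recent_outcomes:
        return False  # nothing observed yet, nothing to flag
    recent_accuracy = mean(1.0 if ok else 0.0 for ok in recent_outcomes)
    return recent_accuracy < baseline_accuracy - tolerance


if __name__ == "__main__":
    # 8 of 10 recent predictions correct against a baseline of 0.90
    print(needs_review(0.90, [True] * 8 + [False] * 2))
```

In practice, the metric and threshold would be defined in the risk management process and the result logged as evidence for audits.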

Integrated into Your Existing Compliance Structures

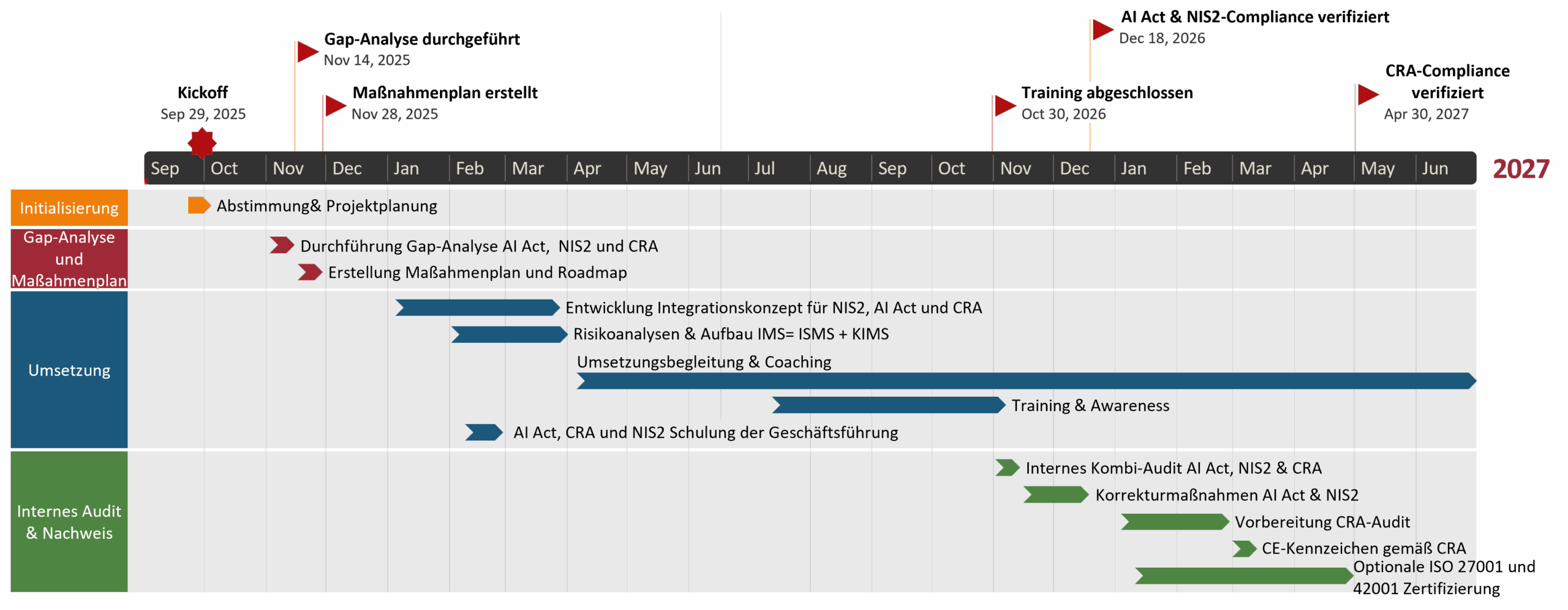

Regulatory Roadmap: AI Act, NIS2, CRA & ISO 27001

Overview of deadlines and milestones for an integrated compliance strategy.

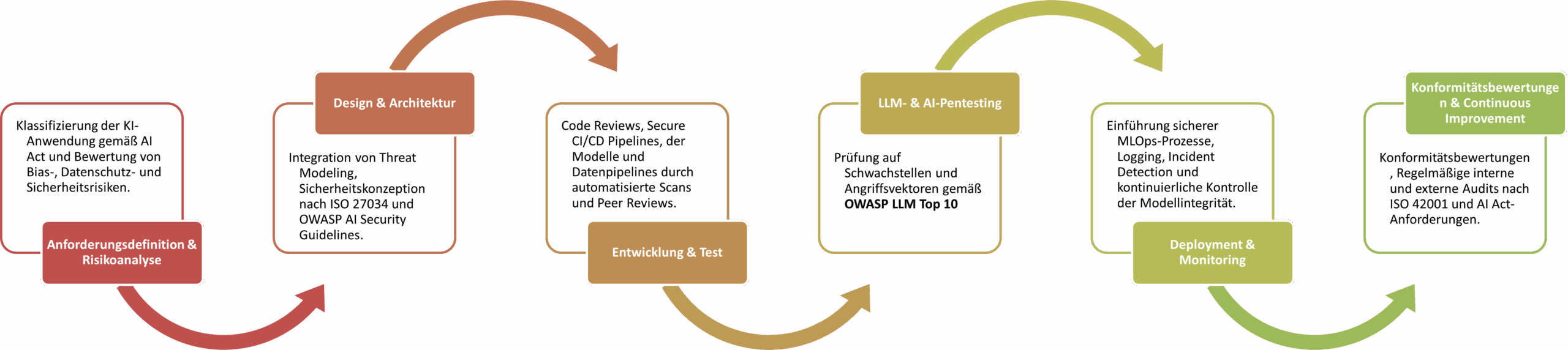

AI Secure Development Lifecycle (AI-SDLC)

Secure development lifecycle for AI systems according to the AI Act and ISO 42001.

EU AI Act – Timeline and Deadlines

GPAI Models and Their Role in the AI Act

General-purpose AI (GPAI) models, such as the OpenAI GPT models behind ChatGPT and Microsoft Copilot, are AI models with broad capabilities that can be applied across a wide range of tasks. The AI Act sets specific requirements for transparency, documentation, and risk classification for these models.

Role of the AI Officer

Monitoring compliance with all guidelines, standards, and laws. Coordinating risk assessment and documentation of AI systems. Ensuring data quality and traceability. Close collaboration with the CISO for unified security and compliance processes.

KI-MIG: National Implementation

With the AI Market Surveillance and Innovation Promotion Act (KI-MIG), the German government has introduced a national law for implementing the AI Act. The Federal Network Agency becomes the central AI regulatory authority in Germany.

Virtual AI Officer by VamiSec

On request, we provide an external virtual AI Officer as an independent point of contact for oversight and compliance — comparable to the vCISO model, but specialized in AI governance.

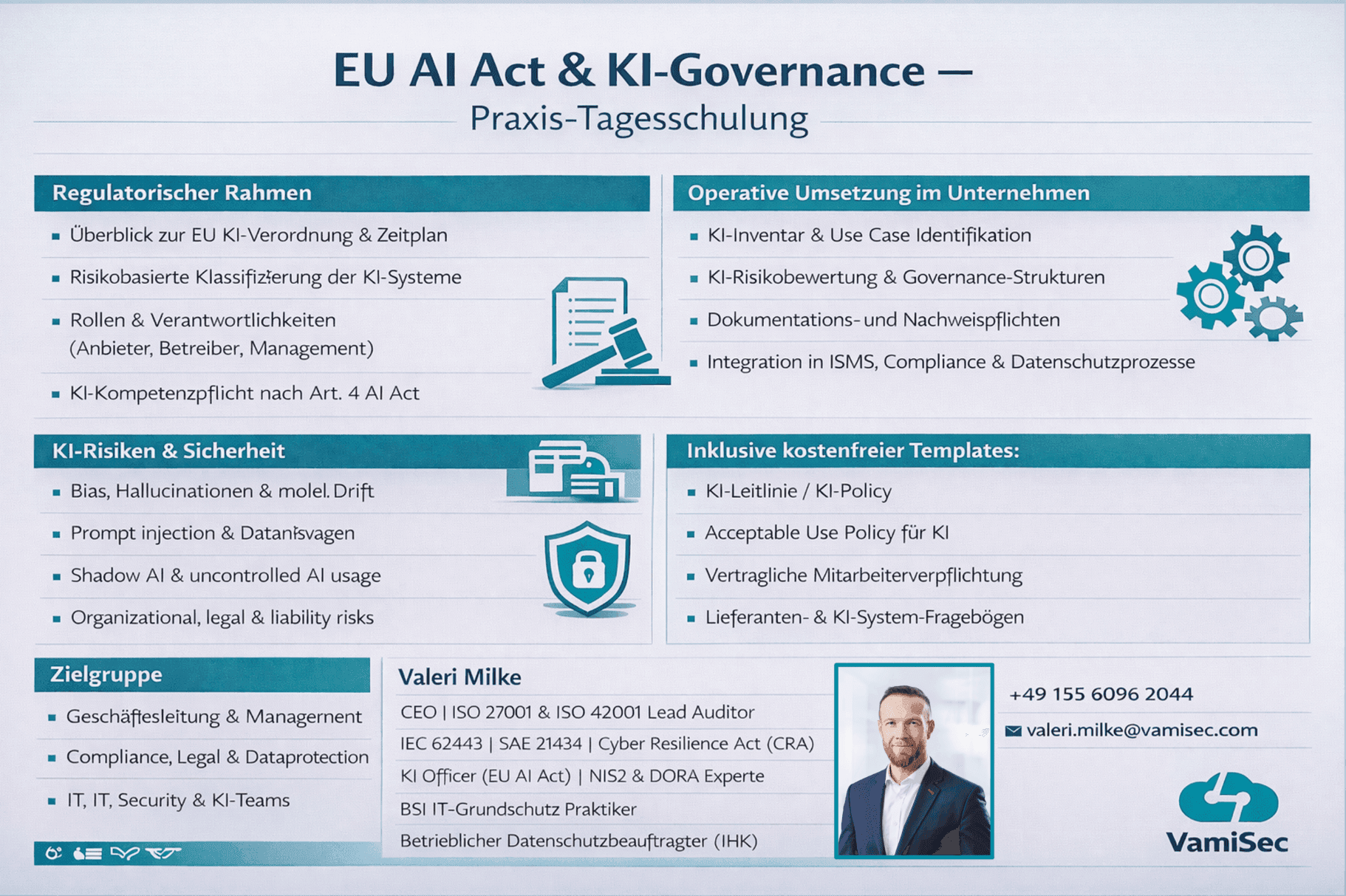

EU AI Act & AI Governance — Hands-on Day Training

This practice-oriented training gives an overview of the EU AI Act and effective AI governance in the enterprise. Topics range from the regulatory framework and risk classification to practical implementation.

The training is flexible: it can be delivered as a compact 1-day course or adapted to your schedule.


Our Services for Your AI Compliance

Provider vs. Deployer — the Right Role Assignment

Providers are companies that develop, adapt, or market AI systems. Deployers are organizations that use and control an AI system without modifying it. Roles are assigned per AI use case, so the same company can be a provider for one system and a deployer for another. The role largely determines the scope of your compliance obligations.
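The per-use-case logic can be sketched as a simple rule. This is a simplified illustration of the distinction described above, not a legal test; the `UseCase` fields and the `assign_role` function are hypothetical names, and borderline cases need a legal assessment.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    develops: bool  # the company builds the AI system itself
    adapts: bool    # the company modifies an existing AI system
    markets: bool   # the company offers the AI system to others


def assign_role(use_case: UseCase) -> str:
    """Return 'provider' if the company develops, adapts, or markets the system."""
    if use_case.develops or use_case.adapts or use_case.markets:
        return "provider"
    return "deployer"


if __name__ == "__main__":
    cases = [
        UseCase("purchased HR screening tool", develops=False, adapts=False, markets=False),
        UseCase("in-house fraud detection model", develops=True, adapts=False, markets=False),
    ]
    for case in cases:
        print(f"{case.name}: {assign_role(case)}")
```

Running the rule for every entry in the AI inventory gives a first overview of where provider obligations apply.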

Frequently Asked Questions

AI Act & ISO 42001 Gap Analysis

After the assessment, we create a consolidated roadmap. Schedule your free initial consultation now.
